Is Reciprocal Link Building Safe for SEO?

Reciprocal link building is one of those SEO topics that gets oversimplified fast.

You’ll hear one person say all link exchanges are dangerous. Another says everyone does it, so it’s fine. Neither view is useful when you’re actually responsible for rankings.

Here’s the practical answer. Reciprocal links are not inherently unsafe. Manipulative link exchange patterns are. Google’s spam policies explicitly call out excessive link exchanges and “link to me and I’ll link to you” style arrangements as link spam. That is very different from two relevant sites linking to each other because they genuinely reference each other’s content or work together in a real way.

If you’ve built links for real businesses, you already know this. Good sites in the same niche often overlap. They quote each other, collaborate on co-marketing, cite each other’s data, and share tools. That kind of reciprocity happens naturally.

The problem starts when the pattern becomes the strategy.

TL;DR

- Safety: Reciprocal links are safe when they are editorial byproducts of real relationships, but risky when built as a systematic ranking strategy.

- Google's Stance: Algorithms (SpamBrain) devalue obvious, excessive exchanges rather than penalizing every mutual link.

- The 73.6% Nuance: Most domains in the Ahrefs data have some reciprocal links naturally; the danger lies in the "reciprocity ratio" and topical mismatch.

- Spread Test: To maintain a natural profile, ensure exchanged links are spread across different content types, dates, and relationship contexts.

- Risk Control: Avoid automated networks and prioritize links that pass the "editorial scrutiny" test: would you keep the link if search engines didn't exist?

So in this guide, we’ll separate normal link overlap from risk-heavy exchange behavior, show what current data still says about reciprocal links, and walk through a safer way to evaluate them.

What Exactly Is Reciprocal Link Building?

A reciprocal link exists when Site A links to Site B and Site B also links back to Site A.

That can happen in two very different ways.

First, it can happen naturally. A SaaS company cites an industry study from another site. Months later, that site references the SaaS company’s template or calculator. Nobody negotiated anything. The overlap is just a byproduct of publishing useful material in the same space.

Second, it can happen intentionally. One site emails another and says, “We’ll link to you if you link to us.” That is the classic link exchange.

Those two scenarios may look similar in a backlink report, but they are not the same thing from an SEO risk standpoint.

A practical way to think about it, and the one distinction to remember: Google evaluates patterns, intent, and context, not just the presence of a return link.

Are Link Exchanges Still Safe for SEO?

Short version: small numbers of relevant, editorially justified reciprocal links can still be fine. Scaled or obvious exchange behavior is not safe.

That has been directionally true for years, and it still holds.

Google’s Current Stance on Link Swaps

Google’s official guidance still matters here because the language is quite clear. Its disavow documentation references manual actions tied to paid links or other link schemes that violate spam policies, and Google’s spam update documentation says spam systems, including SpamBrain, continue to target policy-violating spam patterns.

In practice, Google has long treated excessive link exchanges as a link scheme. That means the risky part is not “two sites linked to each other once.” The risky part is the deliberate pattern of trading links primarily to manipulate rankings. To avoid this, you should focus on building a profile without SEO footprints that could trigger algorithmic devaluation.

That’s why the same backlink pattern can be harmless on one site and dangerous on another.

If two cybersecurity blogs link to each other from relevant guides, that’s normal.

If a payroll software site swaps links with a casino blog, a CBD directory, and three generic “write for us” websites in the same month, that’s not normal.

Algorithmic Devaluation vs. Manual Penalties

Most bad reciprocal links do not end with a dramatic penalty email.

Usually, the first outcome is simpler and more frustrating: Google just discounts the value of those links. Its documentation on spam updates explains that sites violating spam policies can rank lower, and for link spam specifically, fixing the issue does not always lead to a visible recovery because the manipulative signals may simply stop counting.

That is why so many link exchange campaigns feel like they “worked for a bit” and then stopped moving anything. The links may still exist. They just are not carrying the weight the site owner expected.

Manual actions are the more serious version. Google’s disavow documentation says the tool is mainly for sites that have a considerable number of spammy, artificial, or low-quality links that caused, or are likely to cause, a manual action. Google also says most sites do not need to use the tool because it can already assess which links to trust.

So the practical hierarchy looks like this:

- Best case: the reciprocal link is relevant and editorial, so it helps or at least fits naturally.

- Common bad case: the link is manipulative, so Google devalues it.

- Worst case: the site builds enough artificial link patterns to trigger a manual action.

If you are building reciprocal links and seeing no movement, assume devaluation before assuming reward.

What the Latest Industry Data Shows

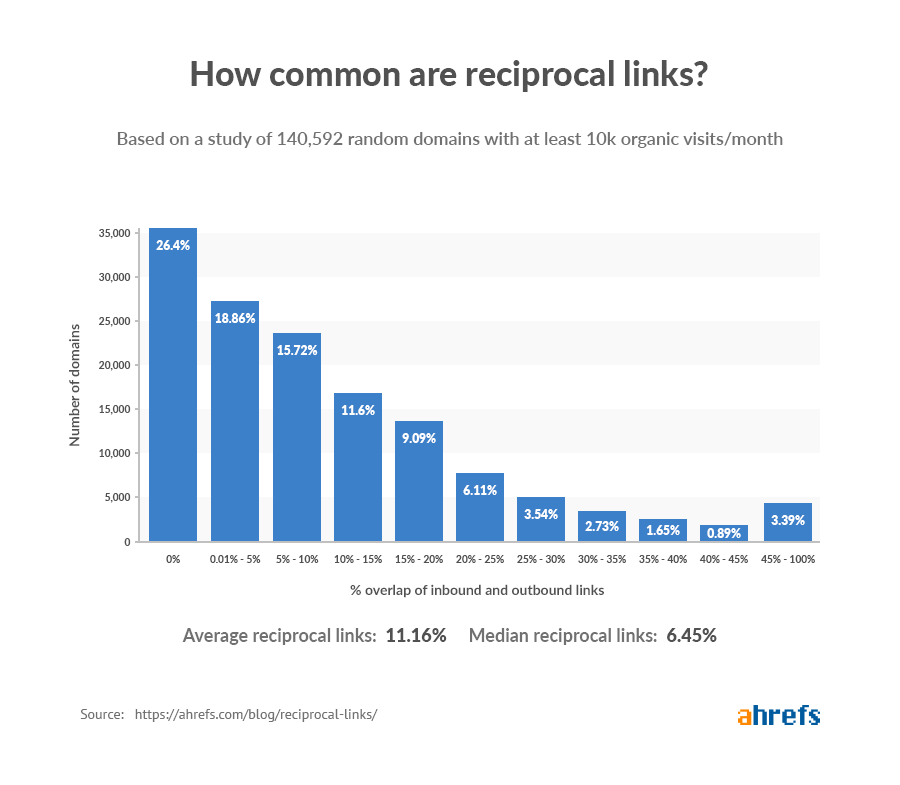

The best-known data point still comes from Ahrefs. Their large-scale study found that reciprocal links are common across the web, and a later Ahrefs stats roundup states that 73.6% of domains have some reciprocal links. Another Ahrefs finding cited widely in the industry is that roughly 4 to 5 of the top 10 ranking pages have reciprocal links.

That matters because it confirms something experienced SEOs already see in the field: reciprocity itself is normal.

But don’t misuse that data.

It does not mean you should try to manufacture reciprocal links at scale. It means that healthy sites naturally accumulate some overlap because the web is interconnected.

A better interpretation is this:

- Some reciprocal links are normal

- High percentages can still be normal in tight niches

- Pattern quality matters more than raw count

That last point is where many teams go wrong. They obsess over the percentage and ignore the footprint.

Natural Link Overlap vs. Manipulative Exchanges

This is the line that matters most.

The same backlink tool can show two sites linking both ways, but one relationship is completely harmless and the other is an obvious scheme.

Why Organic Reciprocity Is Completely Normal

Organic reciprocity happens when two sites operate in the same topic cluster long enough that overlap becomes unavoidable.

Common examples:

- A CRM blog cites a sales benchmark report from a revenue operations site

- That revenue operations site later links to the CRM’s pipeline template

- A legal SaaS partner page links to an integration company

- The integration company links back to a documented case study

- A niche publisher includes your original research

- You later cite their expert commentary in a roundup

None of that is suspicious on its own.

In fact, if your site has zero reciprocity after years of outreach, partnerships, podcast appearances, webinars, and co-marketing, that can be a sign your link profile is oddly artificial in the opposite direction.

Healthy sites usually have some overlap because real businesses have relationships.

Understanding the Reciprocity Ratio

You do not need a magic universal percentage. You need a decision rule.

A simple working formula is:

Reciprocity ratio = referring domains that you also link out to / total referring domains

This is not a Google metric. It is just a practical audit lens.
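If you want to compute the ratio from two exported lists, a minimal sketch might look like the following. It assumes you already have one list of referring domains and one list of domains you link out to; the function name and the sample domains are hypothetical and only there for illustration.

```python
def reciprocity_ratio(referring_domains, outbound_domains):
    """Share of referring domains that your site also links out to."""
    # Normalize case and whitespace so the same domain is not counted twice.
    referring = {d.strip().lower() for d in referring_domains if d.strip()}
    outbound = {d.strip().lower() for d in outbound_domains if d.strip()}
    if not referring:
        return 0.0
    reciprocal = referring & outbound
    return len(reciprocal) / len(referring)


# Hypothetical example data, not taken from any real site.
referring = ["partner.example", "news.example", "blog.example", "tools.example"]
outbound = ["partner.example", "docs.example"]

print(f"{reciprocity_ratio(referring, outbound):.0%}")  # prints 25%
```

The number only tells you where to look. It says nothing about relevance, anchors, or timing, which still need a manual review.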

Use it like this:

- Low ratio + strong relevance usually means no concern

- Moderate ratio + obvious partnerships can still be fine

- High ratio + repeated outreach footprints deserves review

- High ratio + weak relevance + optimized anchors is a red flag

What counts as “high”? There is no official threshold, and anyone giving you a universal safe number is guessing. The better way is to benchmark your niche and check the pattern manually.

For example:

- A B2B SaaS site with 12% reciprocity, mostly from integrations, podcasts, and industry partners, may be perfectly clean

- An affiliate site with 28% reciprocity concentrated in guest post farms and resource pages probably is not

- A local services site with 8 reciprocal links from chambers, suppliers, and local associations is normal

- The same local site with 80 cross-linked “SEO partner” pages is asking for trouble

A good intermediate-level heuristic is this short checklist:

- Are the reciprocal domains topically close?

- Would the links still make sense if Google did not exist?

- Is anchor text natural?

- Did the overlap build up gradually over time rather than arriving in batches?

If the answers are mostly no, the ratio is the least of your problems.

Major SEO Risks and Red Flags to Avoid

Most reciprocal link problems are not caused by the concept. They are caused by bad execution.

Partnering With Unrelated or Low-Quality Domains

Topical mismatch is the fastest way to make a link exchange look fake.

If you run a fintech blog and swap links with a pet care site, a generic coupon domain, and a low-traffic marketing blog that publishes on everything, that footprint is hard to defend.

This is where teams rationalize bad decisions with metrics. They see Domain Rating, ignore relevance, and take the deal.

That is backward.

When I audit risky exchange profiles, the common issue is not just low quality. It is low relevance plus no real editorial reason for the link to exist.

Use this vetting rule before any partnership:

- Same niche or adjacent niche

- Real traffic trend, not just inflated authority metrics

- Consistent publishing history

- No obvious “link pages” stuffed with outbound placements

- No spun content, parasite content, or random topic sprawl

If you want a faster first-pass filter, tools that surface niche relevance, authority, traffic patterns, and spam signals can save time. This is especially useful when trying to find quality link partners that can send real referral visitors to your site. That is one place a workflow like Rankchase can be useful, because it helps narrow the pool before you manually review partner fit.

High-Volume and Obvious Exchange Patterns

A handful of reciprocal links rarely creates a footprint problem.

A campaign built around “send 200 exchange emails this month” absolutely can.

Patterns that stand out:

- Reciprocal links acquired in bursts

- Same-page placements across many domains

- Repeated anchor text formulas

- Links stuffed into author bios, footers, or generic resources pages

- Multiple exchanged links pointing to commercial pages with exact-match anchors

This is where devaluation usually happens first. The links exist, but they stop behaving like assets.

A quick test I use is the spread test.

If your exchanged links are spread across:

- different content types,

- different dates,

- different page intents,

- and different relationship contexts,

they are more likely to look natural.

If they all land on “best X software” or “money page” URLs in the same quarter, they look engineered.
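One rough way to run the spread test on an export is to measure how concentrated your exchanged links are. The sketch below is an assumption-heavy illustration: it takes a hypothetical list of (first seen date, target URL, content type) tuples and reports what fraction of links share the single most common quarter, target page, and content type. There is no official cutoff; values close to 1.0 simply mean the links cluster in one bucket.

```python
from collections import Counter
from datetime import date


def largest_share(values):
    """Fraction of items that fall into the single most common bucket."""
    counts = Counter(values)
    if not counts:
        return 0.0
    return max(counts.values()) / sum(counts.values())


def spread_report(links):
    """links: iterable of (first_seen: date, target_url: str, content_type: str)."""
    links = list(links)
    # Bucket each date into a (year, quarter) pair.
    quarters = [(d.year, (d.month - 1) // 3 + 1) for d, _, _ in links]
    return {
        "same_quarter": largest_share(quarters),
        "same_target": largest_share(url for _, url, _ in links),
        "same_content_type": largest_share(kind for _, _, kind in links),
    }


# Hypothetical exchanged links for illustration only.
links = [
    (date(2024, 1, 10), "/best-crm-software", "listicle"),
    (date(2024, 2, 3), "/best-crm-software", "listicle"),
    (date(2024, 3, 21), "/best-crm-software", "listicle"),
    (date(2023, 6, 5), "/pipeline-template", "case study"),
]

report = spread_report(links)
print(report)
```

In this made-up sample, three of the four links share a quarter, a target page, and a content type, which is the kind of clustering worth a closer manual look.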

Relying on Automated Link Building Networks

This is where reciprocal link building crosses into obvious risk.

Any network that promises bulk exchanges, guaranteed placements, or one-click swaps across a huge inventory of sites is drifting toward the exact pattern Google’s spam systems are designed to neutralize. Google says its automated spam systems, including SpamBrain, are continually improved to catch spam, and sites affected by spam updates should review compliance with spam policies.

The issue is not automation by itself. The issue is automation used to scale low-discretion link manipulation.

If the workflow removes editorial judgment, relevance checks, and placement standards, quality will collapse fast.

A safer system uses automation for discovery and filtering, then applies human review before any collaboration goes live.

When Do Reciprocal Links Actually Make Sense?

This is the part people skip.

Reciprocal links can make sense. They just need a real business reason behind them.

Genuine Collaborations With Industry Peers

The best reciprocal links usually come from relationships that already exist offline or at least outside pure SEO.

Examples that commonly make sense:

- Integration partners

- Joint webinars

- Co-authored research

- Vendor and supplier relationships

- Association memberships

- Case studies and implementation partners

- Podcast swaps where each side references the episode or resource

Notice the pattern. The link is a byproduct of the collaboration, not the product itself.

That is usually a safer place to operate because there is a clear non-SEO reason for the connection.

Providing Actual Value to the User

Before you agree to any placement, ask a brutal question:

Would this link improve the page for a real reader?

If not, don’t force it.

A link exchange becomes risky when it inserts a URL that does nothing except satisfy the trade. Readers can feel that, and Google’s systems are increasingly built to identify pages that exist for ranking manipulation rather than usefulness.

A strong reciprocal placement usually does one of these jobs:

- cites original data

- sends users to a deeper explanation

- points to a useful template or tool

- helps users compare options

- documents a partner relationship clearly

If the link does none of those, it is probably decorative SEO.

Maintaining Strict Topical Relevance

Topical relevance is your safety rail. It is what keeps your links defensible and valuable to readers.

You do not need the other site to be identical to yours, but you do need a believable semantic relationship.

Here’s a practical way to score fit before agreeing to a link:

Green light

- same audience

- adjacent problem space

- similar terminology

- content overlaps naturally

- you can explain the link in one sentence

Yellow light

- broad business overlap but weak page-level relevance

- decent site quality but unclear placement context

Red light

- unrelated audience

- link placed only because authority metric looked good

- no meaningful content relationship

- page created just to host outbound links

That last one is especially common in exchange-heavy outreach. If the page exists mainly to “return the favor,” skip it.
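If your team reviews many prospects, the green, yellow, and red lists can be turned into a small helper. The combination rule here is my own assumption, not something from Google or any tool: any red flag means red, all green signals present means green, and everything else is yellow. The check names are hypothetical labels for the bullets above.

```python
def relevance_light(green_signals, red_flags):
    """Return 'red', 'green', or 'yellow' for a proposed link partner.

    green_signals and red_flags each map a check name to True or False.
    Assumed rule: any red flag wins; green requires every green signal.
    """
    if any(red_flags.values()):
        return "red"
    if green_signals and all(green_signals.values()):
        return "green"
    return "yellow"


# Hypothetical review of one prospect.
green = {
    "same audience": True,
    "adjacent problem space": True,
    "similar terminology": True,
    "content overlaps naturally": True,
    "explainable in one sentence": False,
}
red = {
    "unrelated audience": False,
    "chosen only for authority metric": False,
    "no meaningful content relationship": False,
    "page exists just to host outbound links": False,
}

print(relevance_light(green, red))  # prints yellow
```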

The Truth About Three-Way (ABC) Link Exchanges

Three-way exchanges are usually pitched as the “safe” version of reciprocal links.

The structure looks cleaner on paper, and many SEOs use three-way exchanges to distribute link equity across multiple properties:

- Site A links to Site B

- Site B links to Site C

- Site C links to Site A

Because there is no direct return link between the same two domains, people assume Google cannot or does not treat it as an exchange pattern.

That is not a good assumption.

If the intent is still to trade links for ranking benefit, you are still in link scheme territory. The footprint may be less obvious than a straight swap, but the underlying behavior has not changed. Google’s concern is manipulative linking patterns, not whether the exchange is disguised with one extra hop. Its spam systems are built to detect patterns, and manual actions can apply when artificial linking becomes significant.

In practice, ABC exchanges become risky when they are repeated across the same cluster of sites, use similar anchors, or point mostly at commercial URLs.
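To see why one extra hop does not hide much, here is a minimal sketch that finds A to B to C to A loops in a list of domain-level links. It is only an illustration of how easy the pattern is to spot in a link graph you control, and it is not a description of how Google's systems work. The function name and sample domains are hypothetical.

```python
def find_three_way_cycles(links):
    """Return each A -> B -> C -> A loop once, starting at its smallest domain.

    links: iterable of (source_domain, target_domain) pairs.
    """
    graph = {}
    for source, target in links:
        if source != target:  # ignore self-links
            graph.setdefault(source, set()).add(target)

    cycles = set()
    for a, a_targets in graph.items():
        for b in a_targets:
            for c in graph.get(b, ()):
                # Keep the loop only if C links back to A. Requiring A to be the
                # smallest name stops the same loop being recorded three times.
                if c != a and a in graph.get(c, ()) and a < b and a < c:
                    cycles.add((a, b, c))
    return sorted(cycles)


# Hypothetical link pairs for illustration.
pairs = [
    ("saas.example", "agency.example"),
    ("agency.example", "client.example"),
    ("client.example", "saas.example"),
    ("saas.example", "news.example"),
]

print(find_three_way_cycles(pairs))
```

A single loop like this can be a natural partnership. The same handful of domains showing up in many loops is the repeated cluster described above.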

A small number of triangle-style relationships can occur naturally in real partnerships. For example, a software company links to an agency partner, the agency links to a client case study, and the client links back to the software stack they use. In that case, the triangle is a byproduct of real relationships, not a structure built to avoid a direct reciprocal footprint.

But if your process doc literally has an “ABC exchange” column, you are not doing editorial SEO anymore. You are engineering a footprint.

How to Audit Your Site for Risky Reciprocal Links

If you suspect your site has too many questionable exchanges, don’t start disavowing everything in a panic.

Audit first. Clean second.

Using SEO Tools to Spot Unhealthy Link Overlap

Start by exporting:

- referring domains

- linked pages

- anchor text

- first seen date

- backlink type or placement notes if your tool provides them

Then isolate domains that appear in both directions.

From there, review them through four lenses:

1. Relevance

Does the other domain actually belong in your topical neighborhood?

2. Placement quality

Is the link in a real article, a useful partner page, a case study, or a garbage resource page?

3. Concentration

Are lots of reciprocal links pointing to the same money pages?

4. Timing

Did many of these appear within the same short campaign window?

A mini-workflow that works well:

- Export your backlinks from Google Search Console and one commercial tool

- Cross-match against domains you link out to

- Sort by number of exchanged links per domain

- Manually review the top 20 to 50 overlaps

- Label each one: keep, revise, remove, or monitor

This manual pass tells you more than any “toxic score” by itself.
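The cross-match and sort steps of that workflow can be sketched in a few lines. This assumes you have two plain lists of full URLs (with `https://` or `http://`), one of pages linking to you and one of pages you link out to; real exports will have their own column names. Stripping a leading `www.` is a rough stand-in for root-domain matching, and the keep, revise, remove, or monitor labels are still assigned by hand.

```python
from collections import Counter
from urllib.parse import urlsplit


def domain_of(url):
    """Lowercased hostname without a leading 'www.' (rough root-domain proxy)."""
    host = urlsplit(url).hostname or ""
    return host[4:] if host.startswith("www.") else host


def exchanged_domains(backlink_urls, outbound_urls, top_n=50):
    """Domains that appear in both directions, sorted by total links exchanged."""
    inbound = Counter(domain_of(u) for u in backlink_urls)
    outbound = Counter(domain_of(u) for u in outbound_urls)
    # Drop the empty key produced by URLs that could not be parsed.
    overlap = (inbound.keys() & outbound.keys()) - {""}
    rows = [(d, inbound[d], outbound[d]) for d in overlap]
    rows.sort(key=lambda r: (-(r[1] + r[2]), r[0]))
    return rows[:top_n]


# Hypothetical export data for illustration.
backlinks = [
    "https://www.partner.example/post-1",
    "https://partner.example/post-2",
    "https://news.example/story",
    "https://blog.example/roundup",
]
outlinks = [
    "https://partner.example/",
    "https://blog.example/about",
    "https://docs.example/api",
]

for domain, links_in, links_out in exchanged_domains(backlinks, outlinks):
    print(domain, links_in, links_out)
```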

Also remember that Google says most sites do not need to use the disavow tool because it can generally assess which links to trust. Use tool scores as prompts for review, not as automatic verdicts.

How to Rebalance Your Link Profile Safely

If you find risky overlap, fix it in order of impact.

Start with the worst offenders:

- unrelated sites

- obvious exchange pages

- exact-match anchor swaps

- sitewide or footer links

- clusters built during a single outreach push

Then decide the right action for each one (keep, revise, remove, or monitor), and treat disavow as a last resort. Google’s official guidance says disavow is for cases where you have a considerable number of spammy, artificial, or low-quality links and the links caused, or are likely to cause, a manual action. It also recommends trying to remove bad links first.

So the safe order is:

- stop creating more risky exchanges

- remove or edit the worst ones

- diversify with better earned links

- use disavow only when the profile truly warrants it

That is how you rebalance without overcorrecting.

Safer Link Building Alternatives That Work Today

If you want authority growth without leaning too hard on reciprocal deals, there are better options.

Digital PR and Earned Media

Digital PR still works because it creates links people do not need to “pay back.”

Original data, expert commentary, trend reports, and news-reactive assets can still earn strong links when the angle is real. These links are usually safer because the editorial reason is obvious.

A good simple workflow:

- pull one internal dataset or trend

- turn it into a clear claim with supporting numbers

- package it into a page with charts and methodology

- pitch only the journalists or publishers who actually cover that topic

If the asset is strong, you get citations without needing to negotiate return links.

Developing Highly Linkable Content Assets

Most sites chase links with average blog posts and then wonder why exchanges feel easier.

Instead, build assets people naturally cite:

- original studies

- benchmark reports

- calculators

- templates

- glossaries for niche terms

- comparison frameworks

- curated statistics pages that are updated carefully

These work because they solve a citation problem.

When someone needs to support a claim, they can link to your asset without any arrangement. That gives you a cleaner backlink profile and reduces the temptation to trade links just to hit growth targets.

Targeted Niche Edits and Outreach

Good outreach still works when it is selective.

The safest version is not blasting templates. It is identifying pages where your resource genuinely improves the content and then making a tight, relevant ask.

That means:

- no generic “I noticed your article”

- no authority-first targeting with weak topical match

- no asking for links to pages that are not clearly useful

- no reciprocal condition attached to the pitch

If you do want to build relationships in the same niche for future collaborations, keep that separate from the outreach itself. First build relevance. Then let links happen where they make editorial sense.

Frequently Asked Questions

Will Google penalize my site for a few reciprocal links?

Usually, no.

A few reciprocal links between relevant sites are common and normal across the web. The risk rises when the links are part of a manipulative pattern, especially if they are excessive, low-quality, or clearly exchanged for ranking gain. Google’s documentation also makes clear that most bad links are handled algorithmically, while manual-action-level intervention is more associated with substantial artificial link problems.

What percentage of reciprocal links is considered safe?

There is no official safe percentage.

Anyone giving you a fixed threshold is overselling certainty. Use context instead. Ahrefs data shows reciprocal links are widespread across the web, so the presence of some overlap is not a danger signal by itself.

A more useful rule is this:

- low to moderate overlap from relevant sites is usually fine

- high overlap from unrelated or obviously exchanged placements is not

Focus on quality, relevance, placement, and acquisition pattern before you focus on ratio.

Should I accept link exchange requests via email?

Most cold exchange requests should be filtered hard.

A good request has:

- strong topical fit

- a real page suggestion

- a clear reason the link helps users

- no pressure for a direct swap on the spot

A bad request usually mentions DR first, relevance second, and users not at all.

If you do consider one, run this quick screen:

- Is the site genuinely in your niche?

- Is the proposed page real and useful?

- Would you still want the link if they never linked back?

- Does the placement improve your page for readers?

If two or more answers are no, decline it.
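If several people triage these emails, the screen is simple enough to encode so everyone applies the same rule. This is a minimal sketch of the rule above; the function name and return labels are hypothetical.

```python
SCREEN_QUESTIONS = (
    "Is the site genuinely in your niche?",
    "Is the proposed page real and useful?",
    "Would you still want the link if they never linked back?",
    "Does the placement improve your page for readers?",
)


def screen_request(answers):
    """answers: one True/False per question, in SCREEN_QUESTIONS order."""
    if len(answers) != len(SCREEN_QUESTIONS):
        raise ValueError("expected one answer per screening question")
    no_count = sum(1 for answer in answers if not answer)
    # Two or more "no" answers means decline, as in the rule above.
    return "decline" if no_count >= 2 else "consider"


print(screen_request([True, False, False, True]))  # prints decline
```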

That one habit will save you from most reciprocal link problems.